Meron Gribetz of Meta, an augmented reality glasses maker, is asking designers and developers to challenge the status quo of interface design. The computer interfaces we are familiar with today were essentially developed fifty years ago by SRI and Xerox PARC, which laid the foundation for the flat, icon- and menu-based approach we still take to computing.

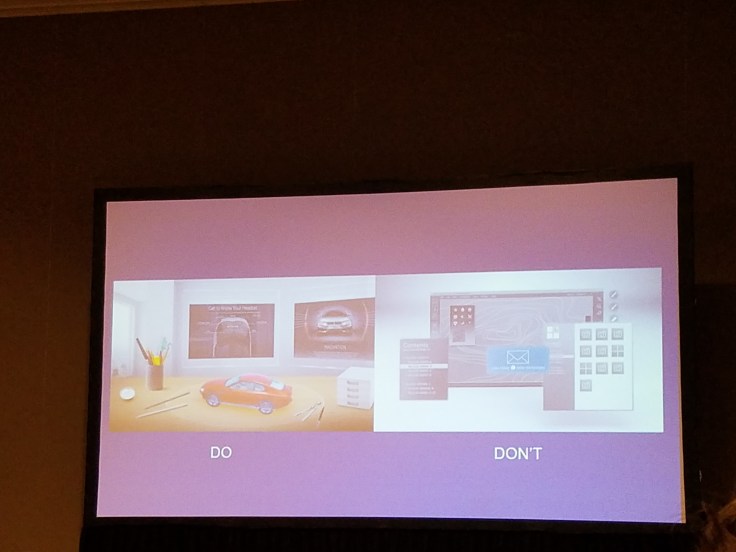

As augmented reality headsets get ready to roll out into the consumer market, Gribetz sees a potential calamity emerging: designers and developers will fall back on the interface design tropes of the past and translate them into an AR world of menus, maps and pop-ups exploding in front of our vision. In a SXSW 2017 session on augmented reality for designers and developers, Gribetz colorfully illustrated three problems: the clutter and confusion that would arise from taking a traditional menu- and icon-based approach to interface design; the challenges of an interactive system that demands numerous complex gestures to manage; and the anxiety-inducing, toe-stubbing potential of using mixed reality to overwrite the world around us, versus the more transparent approach of simply augmenting reality.

Because of these perceived challenges, Meta has been working with neuroscientists to understand how the human brain works. Beginning with a totally blank canvas, the company is reinventing the principles of interface design, with the goal of zero learning curve computing for the augmented reality environments of the future.

Gribetz presented 9 principles that form the foundations of designing interfaces for zero learning curve AR:

1 – Spatial Design

AR means that computing is no longer confined to a little flat rectangle that needs to be navigated through a hierarchy of menus and icons. Designers should fight the urge to flatten the world in AR, and instead design interfaces where the elements are arranged around us as they would be in the real world, similar to an artist’s desk. Those elements should be three-dimensional and have affordances (an affordance is the possibility of an action on an object or environment: things that cue your brain to “pick me up” or “use me like this”). Neuroscience tells us that the human brain has two visual systems, one that is essentially the object recognition portion of our brains, and another that is essentially our visual working memory. Traditional computer interfaces only activate one half of our visual system. Gribetz proposes that we design AR interfaces that activate both visual systems, and tap more deeply into the way our brains function to make the interfaces more intuitive.

2 – Minimize Abstractions

Flat tools and icons are ambiguous: our brains have to work harder to interpret their meaning, and this slows us down and confuses us. By minimizing abstractions, making tools 3D and having them resemble what they are in real life, we are able to leverage the power of our innate wiring. An eraser should look like a 3D eraser that you pick up and use the way you would use an eraser in real life.

3 – The World is Your File System

Humans live in a world where we use spatial memory to recall where we have put things. File storage in a traditional computer does not activate our spatial memory, so regardless of how sophisticated our file naming systems are, we constantly lose files when we can’t intuitively recall the exact file names. Gribetz proposes that the world is our file system, and that we should store our files in digital furniture. For example, open a drawer and pull out a digital photo album that resembles a photo album in real life. If you are looking for a specific photo, you are more likely to recall that it’s about halfway through the album, and you can flip the pages and find it quickly, because using spatial memory activates more of your neural circuitry.

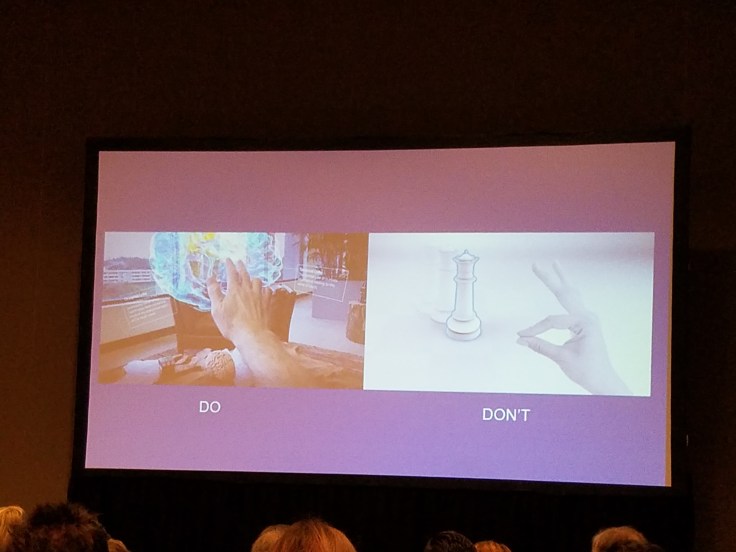

4 – Touch to See

Babies instinctively reach out and touch things. When we want things in the real world we reach out and touch them. Don’t use controllers or complex hand gestures to control augmented reality; use movements that our brains are already hardwired for. Your brain has a spatial depth map that is specifically dedicated to the areas around your hands. By touching objects directly, we can manipulate them more dexterously and understand them more deeply. Letting users reach out, take things and manipulate them as they can in the real world makes the interface completely intuitive. This allows users to get into their “flow” much more quickly, and sustains and protects that mental flow.

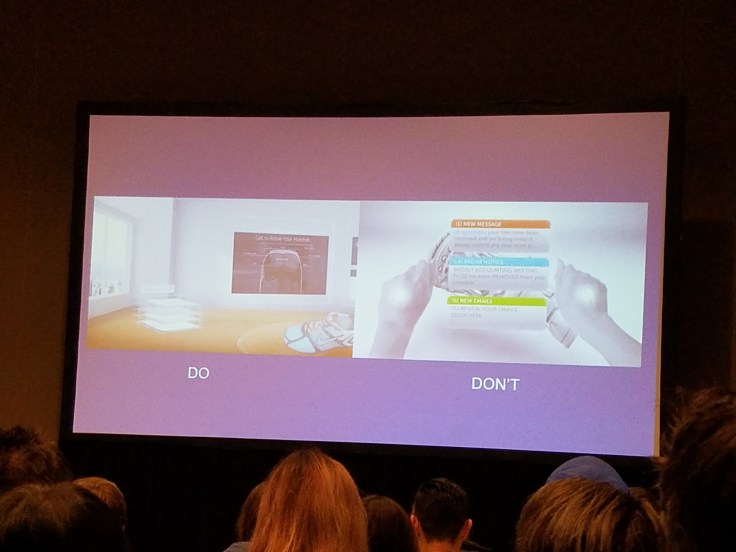

5 – Do Not Disturb

Can you imagine a world where we wake up every morning, put on our AR glasses, and are immediately bombarded with pop-ups? Or you are driving down the street and you get hit by pop-ups, and you crash! Pop-ups are essentially designed to break a user’s flow. They disrupt what is happening. Rather than allowing users to control the interface, pop-ups end up controlling the user. Instead, use a receptacle like a little file rack or a mailbox, and allow the user to control the location of the receptacle, even to put it in another room, and for the first time in history put the user in control. Give users the autonomy to protect their mental flow: eliminate flashing red pop-ups that activate their fight-or-flight responses, and the notifications from Facebook that someone ate sushi.

6 – Avoid Surprises & Magic Tricks

The form of an object should imply information about its function. If we use a Harry Potter wand that can do 1000 different things, it’s all very fancy, but our brain has absolutely no intuitive information about what it can use that wand for, and begins firing off error neurons signaling that what it’s seeing doesn’t make sense. Instead, simply extend the laws of Newtonian physics, use subtle nuance, and design tools to look like they do what they do. That way a task takes fewer cognitive resources to complete and places less demand on our limited attention span. Work with users’ existing mental maps and avoid an ambiguous user interface.

7 – The Holographic Campfire

If two users are working together, never obscure their faces or hands. We have an amazing amount of mental circuitry that gleans huge amounts of information from eye contact and hand movements, and this underlies interpersonal communication. Never place clutter around faces or hands and disrupt the connection between people.

8 – Public by Default

Cellphones are little, private rectangles. However, the real world is a public place. If augmented reality is an extension of the real world, we should be able to share what we see harmoniously. In AR, people will have trouble interpreting your actions if they cannot see what you are doing. A private interface is asymmetric, and affects how the public perceives you. Of course, you should be able to make things private if you choose to, but designers should begin with the approach of “public by default”.

9 – Augmented Reality, Not Mixed Reality

Mixed reality overwrites and obscures the world around us, adding to the real world to create a new version of reality. Gribetz advocates for simply augmenting reality, adding subtle nuances to the real world rather than overriding it. Instead of having whales leaping out of gym floors, let’s focus on being able to touch a flower in the real world and have information about that flower visible. We don’t want to change reality, we simply want to augment it.

Meta plans to release more information on these principles for designing AR interfaces in the next few weeks. Gribetz argues that now is the time for designers to step in, abandon interface designs built on 50-year-old tropes, and instead work to optimize human potential by creating a beautiful and sustainable approach to AR interfaces.
